Madhavan Mukund

Advanced Machine Learning

Sep–Dec, 2021

Assignment 1: DNNs and CNNs

31 October, 2021

Due 19 November, 2021 (extended from 14 November)

The Task

- In Lectures 4 and 6, we explored DNNs and CNNs for MNIST. By scrambling the images, we observed that DNNs do not seem to use visual information, whereas CNNs do.

  Try a similar experiment with the following datasets and report your findings.

-

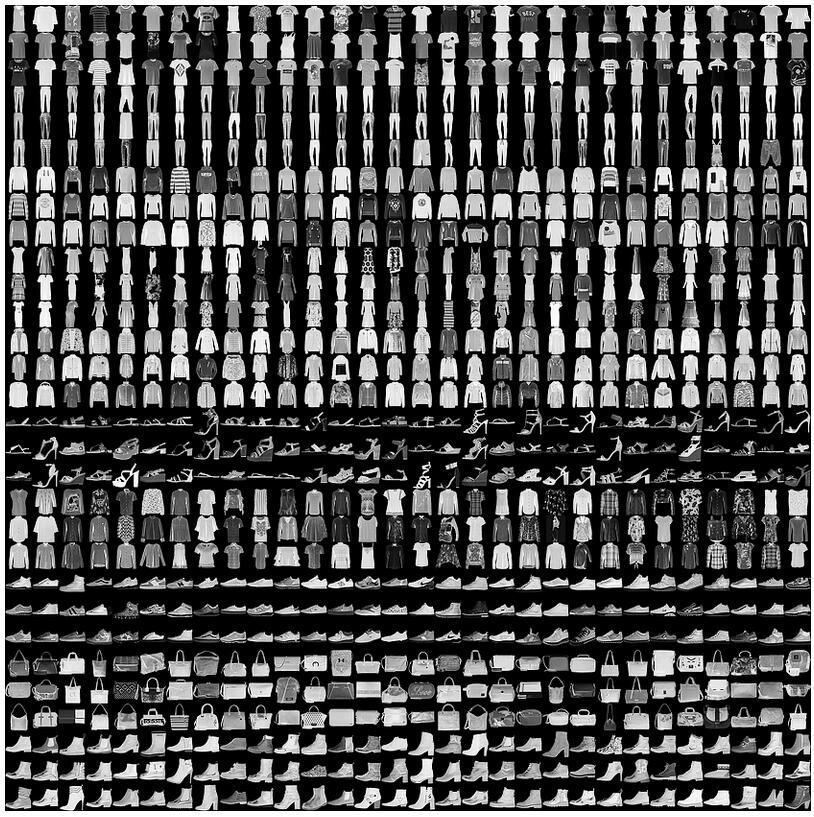

The Fashion MNIST Dataset contains 60,000 training images and 10,000 training images, each 28×28 pixels, representing ten classes of clothing. Here are examples of the images.

-

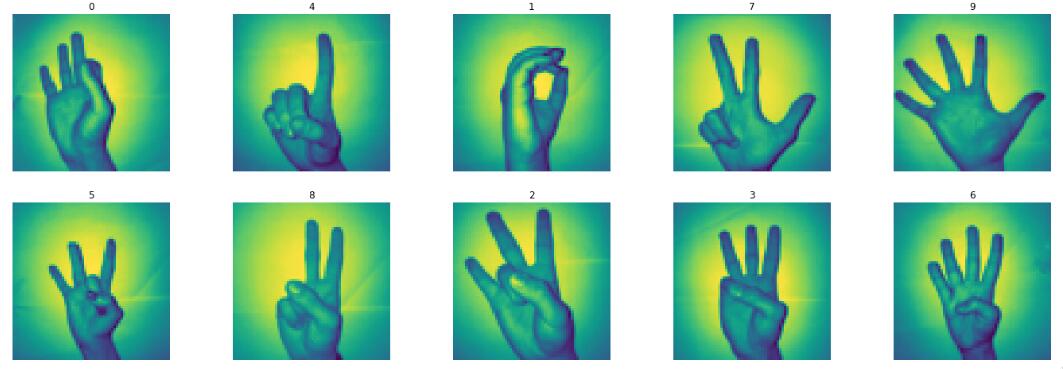

The Sign Language Digits Dataset contains 2062 64×64 pixel images of the digits 0 to 9 represented using sign language. Here are examples of the images.
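The scrambling experiment mentioned above can be sketched as follows. This is a minimal illustration, assuming that "scrambling" means applying one fixed random permutation of pixel positions to every image; the lectures may have used a different scheme. The function name `scramble_images` and its `seed` parameter are hypothetical, and the input is assumed to be a NumPy array of shape (n, height, width).

```python
import numpy as np

def scramble_images(images, seed=0):
    """Apply the same random pixel permutation to every image in a batch.

    Assumes `images` has shape (n, height, width). Returns the scrambled
    batch and the permutation that was used, so that the identical
    permutation can be reused on a test set.
    """
    images = np.asarray(images)
    n, h, w = images.shape
    rng = np.random.default_rng(seed)
    perm = rng.permutation(h * w)          # one permutation shared by all images
    flat = images.reshape(n, h * w)        # flatten each image to a pixel vector
    scrambled = flat[:, perm].reshape(n, h, w)
    return scrambled, perm
```

Using the same permutation for the training and test images keeps the set of pixel values in each image unchanged while destroying spatial neighbourhoods, which is the property the DNN vs CNN comparison relies on.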

- The parity function takes as input a vector of 2n bits and checks if the number of 0's (and 1's) in the input is even. It is more convenient to think of the input as a vector $(x_1, x_2, \ldots, x_{2n})$ where each $x_i \in \{-1, +1\}$. The parity function then reports the product $x_1 x_2 \cdots x_{2n}$, which is +1 if the number of entries equal to -1 is even and -1 if it is odd.

- Try to train a DNN for the parity function on 64-bit inputs. Use randomly generated training sets of sizes 2000 and 5000 and test the results on a suitable validation set.

- Manually design a good network for the parity function and compare its results with respect to the DNNs that were learned from training data.
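As a starting point for the parity experiments, the ±1 encoding described above can be generated as follows. This is only a sketch: the function name `make_parity_data`, its parameters, and the choice of NumPy are assumptions, not part of the assignment.

```python
import numpy as np

def make_parity_data(num_samples, num_bits=64, seed=0):
    """Generate random +/-1 vectors and their parity labels.

    Each row of X is a vector with entries in {-1, +1}. The label is the
    product of the entries: +1 if the number of -1 entries is even,
    -1 if it is odd.
    """
    rng = np.random.default_rng(seed)
    X = rng.choice([-1, 1], size=(num_samples, num_bits))
    y = np.prod(X, axis=1)
    return X, y
```

For example, `make_parity_data(2000)` and `make_parity_data(5000, seed=1)` would give training sets of the two sizes mentioned above, and a validation set can be drawn with a different seed.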

-

Solving the Task

- Submit three separate Python notebooks that contain your code and the outcomes of your experiments, with suitable explanations.

- One with the DNN vs CNN experiments for the Fashion MNIST and Sign Language Digits datasets.

- One with the DNNs that learn the parity function from training data.

- One with the manually constructed network for the parity function.

- You may use Kaggle or Colab for this. In this case, you can also submit a link to your notebook via Moodle.

You may work alone or in groups of two. Each group makes a single submission to Moodle. Use either person's Moodle account to submit. The submission should mention the names of the two partners.